and Education Week - GlassLab Opens Opportunity for Education-Game Makers

In summer 2012, the Bill & Melinda Gates Foundation, in cooperation with the MacArthur Foundation, made a significant investment to establish the Games Learning and Assessment Lab (GlassLab). Housed at Electronic Arts and Co-Lab (Zynga), the lab includes top game developers, learning scientists, assessment designers, and researchers from multiple fields and disciplines.

The program was divided into two teams to mitigate conflicts of interest and guarantee independent validation of the assessments developed by the program.

The programming and development group (GlassLab) was tasked with designing and developing state-of-the-art, game-based formative assessments. These assessments are being developed in response to the student disengagement that currently exists in many classrooms. By leveraging the popularity of digital video games and applying Evidence-Centered Design (ECD), the game-based formative assessments address the needs of both students and teachers for reliable, valid, and actionable real-time data within a motivating learning environment. This work is being conducted by the Institute of Play, the Educational Testing Service (ETS), Pearson, Inc., Analytics and Adaptive Learning, Electronic Arts (EA), and the Entertainment Software Association (ESA).

Concurrently, the Foundation tasked the SRI-led research team (GlassLab-Research) with conducting independent research on the qualities, features, inferential validity, reliability, and effectiveness of the assessments embedded within the GlassLab game products. The GlassLab-Research work is being conducted by experts in assessment, learning sciences, science education, and learning technology at SRI, with the support of external consultants.

Last month GlassLab announced that it is moving to provide free assessment and analytics technology to third-party digital learning game developers, including an initial cohort of five groups beginning this fall.

The goal is to help those developers more efficiently capture the torrents of data generated from student game play, process that information for signs that students are mastering academic standards, and display the results to students, teachers, and others via easy-to-use dashboards.

Over 100 groups, including research organizations, small startups, and established commercial players, submitted applications to be part of the initial cohort of developers with whom GlassLab will partner, according to Executive Director Jessica Lindl.

Among other things, the groups will receive computer code that integrates into their existing games to help collect data, as well as access to an Assessment Engine that processes that data against key academic standards.

Lindl said that GlassLab assessment experts from Pearson and ETS will also work directly with the third-party developers to figure out how the data generated by their games connect to academic standards.

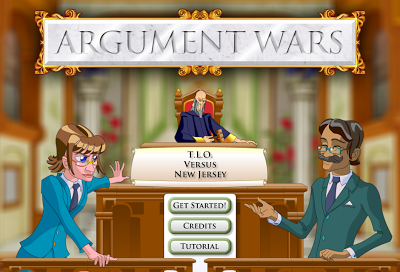

The model for the new partnerships will be a recently completed pilot effort involving GlassLab and Washington-based iCivics, a nonprofit that develops web-based learning games such as Argument Wars. That game is meant to help students learn the skills of evidence-based persuasive argumentation, which happens to be a key piece of the new Common Core State Standards (see also "Serious Games Boost Civic Education In The Classroom" and "iCivics 2013 Report: Serious Games Reaching 35,000 Educators Across 9,000 Schools").

Through the partnership with GlassLab, iCivics has been able to generate a much more robust portrait than was previously possible of what students are learning in Argument Wars.

In addition to knowing if a student won the game and was engaged while playing, iCivics can now see a portrait of the reasoning strategies and other mental processes used by the student. That information is aligned to academic standards and fed back to teachers to help them know what type of instructional help each student needs next.

"Because GlassLab has its own argumentation game (Mars Generation One: Argubot Academy, currently in beta-testing and expected to be publicly released this August), researchers were able to do sophisticated reliability and validity testing," Lindl said (see also "ELA Serious Games Infused With Stealth STEM Content").

Mars Generation One: Argubot Academy offers an example of how that technology works.

In the game, players (typically middle school students) find themselves in a settlement on Mars where disputes are resolved through formal arguments. To succeed, players must search for evidence that can support the claims they are trying to make. They also must critique others' arguments, determining whether the evidence presented supports the claim effectively. Players advance by winning argument "duels" against opponents.

"Over the course of a complete 90-minute cycle of game play, players might engage in eight or more such duels and be asked to critique 20 claim-evidence pairings," said Seth M. Corrigan, a research scientist for learning analytics with GlassLab.

The tools that the group has developed are used to gather, organize, and analyze the resulting data to create a model of what students know and have learned. First, the telemetry data generated by players' clicks and other in-game decisions, limited to the types deemed relevant through extensive analysis, are located and stored in secure, custom-built databases. Then, that information is fed into an "assessment engine" that determines what students' game-play patterns reveal about their mastery of three specific Common Core standards related to argumentation and reading informational texts. Finally, those results are reported back to students, teachers, parents, and others through digital dashboards.
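
The three steps described above (store relevant telemetry, score it against standards, report the results) can be illustrated with a rough Python sketch. Everything in it is an assumption made for illustration: the event names, the standard labels, the `Event` record, and the 70% threshold are hypothetical and are not GlassLab's actual schema or code. In particular, the scoring here is a simple success rate, whereas an assessment engine built on Evidence-Centered Design would be expected to use a proper statistical model.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical event types treated as relevant evidence (not GlassLab's real schema).
RELEVANT_EVENTS = {"critique_claim_evidence", "duel_result"}

# Hypothetical mapping from event type to a placeholder standard label.
EVENT_TO_STANDARD = {
    "critique_claim_evidence": "evaluate-argument",
    "duel_result": "support-claim-with-evidence",
}


@dataclass
class Event:
    student: str
    kind: str
    correct: bool


def collect(raw_events):
    """Step 1: keep only the telemetry deemed relevant."""
    return [e for e in raw_events if e.kind in RELEVANT_EVENTS]


def assess(events):
    """Step 2: toy 'assessment engine' giving a success rate per student and standard."""
    tallies = defaultdict(lambda: [0, 0])  # (student, standard) -> [successes, attempts]
    for e in events:
        key = (e.student, EVENT_TO_STANDARD[e.kind])
        tallies[key][0] += int(e.correct)
        tallies[key][1] += 1
    return {key: wins / tries for key, (wins, tries) in tallies.items()}


def dashboard(scores, threshold=0.7):
    """Step 3: one plain-text dashboard row per student and standard."""
    rows = []
    for (student, standard), score in sorted(scores.items()):
        status = "on track" if score >= threshold else "needs support"
        rows.append(f"{student} | {standard} | {score:.0%} | {status}")
    return rows


raw = [
    Event("ana", "critique_claim_evidence", True),
    Event("ana", "critique_claim_evidence", False),
    Event("ana", "duel_result", True),
    Event("ana", "menu_click", False),  # irrelevant event, dropped in step 1
]

for row in dashboard(assess(collect(raw))):
    print(row)
```

Keeping the three stages as separate functions mirrors the description: a third-party game would only need to emit events, while the scoring and reporting logic could change without touching the game itself.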

As part of its new effort, GlassLab is also preparing to introduce a website that teachers will be able to access directly to find games and related instructional materials and to monitor students' progress.